Is it just me or has public discourse gone mad?

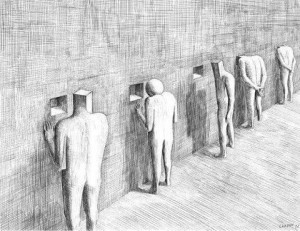

A brief perusal of social media largely finds accusation, name-calling, and outrage instead of exploration, dialogue, and debate. Not that any of the latter options were ever simple, straightforward, or successful, but somehow, somewhere, taking a stand has replaced extending a hand.

Thus, slightly more than a year ago, I was compared to an ignorant cult leader by a person (a researcher and proponent of CBT) who’d joined an open discussion about a post of mine on Facebook. From there, the tone of the exchange only worsened. Ironically, after lecturing participants about their “ethical duties” and suggesting we needed to educate ourselves, he labelled the group “hostile” and left, saying he was going to “unfriend and block” me.

As I wrote in my last blogpost, I recently received an email from someone accusing me of “hiding” research studies that failed to support feedback-informed treatment (FIT). Calling it “scandalous,” and saying I “should be ashamed,” they demanded I remove them from my mailing list. I did, of course, but without responding to the email.

And, therein lies the problem: no dialogue.

For me, no dialogue means no possibility of growth or change, on my part or others’. To be sure, when you are a public person, you have to choose to what and whom you respond. Otherwise, you could spend every waking moment either feeling bad or defending yourself. Still, I always feel a loss when this happens. I like talking, and I am curious about and energized by different points of view.

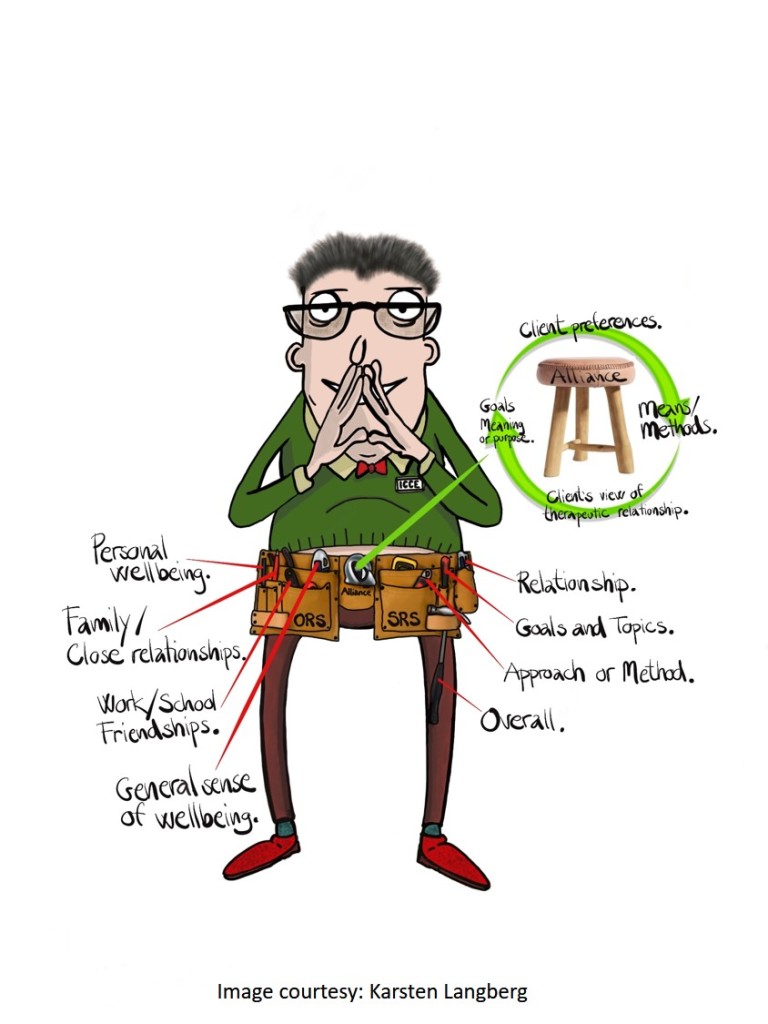

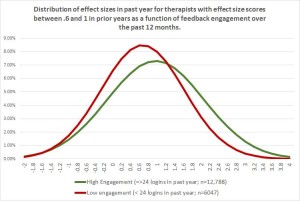

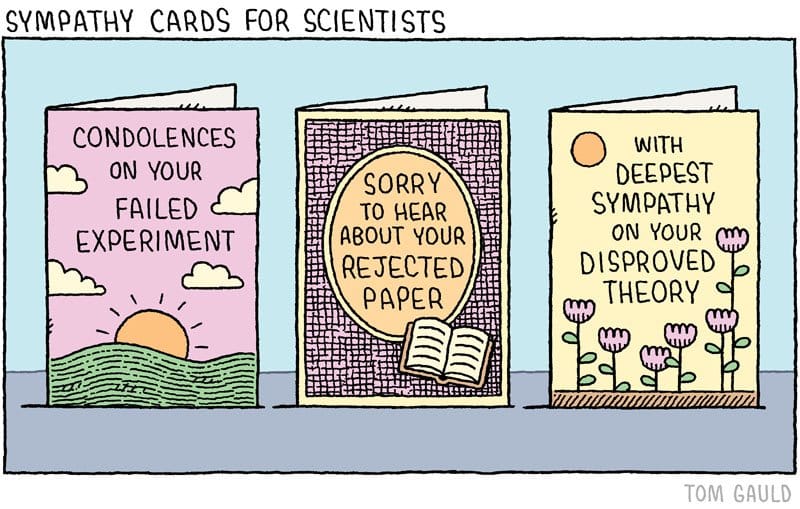

That’s why, when my Dutch colleague, Kai Hjulsted, posted a query about the same study I’d been accused of hiding, I decided to devote several blogposts to the subject of “negative research results.” Last time, I pointed out that some studies were confounded by the stage of implementation clinicians were in at the time the research was conducted. Brattland et al.’s results indicate that, consistent with findings from the larger implementation literature, it takes between two and four years to begin seeing results. Why? Because becoming feedback-informed is not about administering the ORS and SRS, which can be taught in a matter of minutes. Rather, FIT is about changing practice and agency culture.

(By the way, today I heard through the grapevine that a group using FIT, the subject of a published study that found no effect, has started to experience fairly dramatic improvements in outcome and retention in its fourth and fifth years of implementation.)

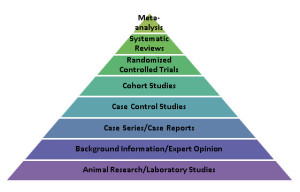

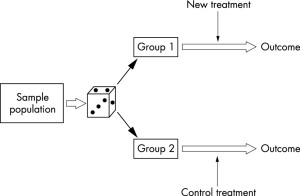

As critical as time and ongoing support are to successful use of FIT, these two variables alone are insufficient for making sense of emerging, apparently unsupportive studies. Near the end of my original post, I noted the need to look at the type of design used in most research, namely the randomized controlled trial, or RCT.

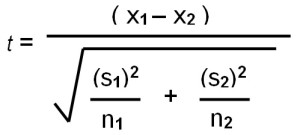

In the evaluation of health care outcomes, the RCT is widely considered the “gold standard,” the best way of discovering the truth. Thus, when researcher Annika Davidsen published her carefully designed and executed study showing that adding FIT to the standard treatment of people with eating disorders made no difference in terms of retention or outcome, it was entirely understandable that some concluded the approach did not work with this particular population. After all, that’s exactly what the last line of the abstract said: “Feedback neither increased attendance nor improved outcomes for outpatients in group psychotherapy for eating disorders.”

But what exactly was “tested” in the study?

Read a bit further, and you learn that participating “therapists … did not use feedback as intended, that is, to individualize the treatment by adjusting or altering treatment length or actions according to client feedback” (p. 491). Indeed, when critical feedback was provided by the clients via the measures, the standardization of services took precedence, resulting in therapists routinely responding, “the type of treatment, its length and activities, is a non-negotiable.” From this, can we really conclude FIT was ineffective?

Moreover, unlike studies in medicine, which test pills containing a single active ingredient against others that are similar in every way except that they are missing that key ingredient, RCTs of psychotherapy test whole treatment packages (e.g., CBT, IPT, EMDR, etc.). Understanding this difference is critical when trying to make sense of psychotherapy research.

When what is widely recognized as the first RCT in medicine was published in 1948, practitioners could be certain streptomycin caused the improvement in pulmonary tuberculosis assessed in the study. By contrast, an investigation showing one psychotherapeutic approach works better than a no-treatment control does nothing to establish which, if any, of the ingredients in the method are responsible for change. Consider cognitive therapy (CT). Many, many RCTs show the approach works. On this score, there is no doubt. People who receive it are much better off than those placed on a waiting list or in control groups. That said, how cognitive therapy works is another question entirely. Proponents argue its efficacy results from targeting the patterns of “distorted thinking” causally responsible for maladaptive emotions and behaviors. Unfortunately, RCTs were never designed and are not equipped to test such assumptions. Other research methods must be used, and when they have been, the results have been surprising, to say the least.

In my next post, I will address those findings, both as they apply to popular treatment models such as CT and CBT and, more importantly, as they apply to FIT.

Stay tuned. In the meantime, I’m interested in your thoughts thus far.

Until then,

Scott

Scott D. Miller, Ph.D.

Director, International Center for Clinical Excellence